Deploying a Kubernetes Cluster with orcharhino

This blog post introduces an orcharhino feature called application-centric deployment (ACD) and describes how to use it to deploy a Kubernetes cluster. ACD allows administrators to create hosts based on application templates and makes it easier to run multiple instances or versions of a complex application.

An example of this is a Kubernetes cluster consisting of multiple hosts that have to work together. We used this as our internal demo application and released it on GitHub. It is now showcased as part of this blog post.

You can use our ACD Plug-in to deploy applications in an automated way.

What is Application-Centric Deployment?

Deploying applications using orcharhino usually starts with creating and configuring the hosts that will serve the application.

With complex applications consisting of multiple hosts, this becomes pretty tedious, especially if you want to deploy the application multiple times.

You might redeploy an application to apply certain changes or, for example, to run it in both a production and a development environment.

To make the deployment easier, the common approach is to work with solutions like Ansible or Puppet.

A typical application deployment with Ansible consists of the following steps:

- Create hosts

- Provision hosts

- Create an inventory and group hosts

- Adjust group variables for the environment

- Run Ansible roles to deploy the application on the inventory

Notice that all these steps have to be repeated each time you want to deploy a new instance.
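To illustrate why the "adjust group variables" step gets repetitive, here is a minimal Python sketch that layers per-environment overrides on top of shared defaults. The group names (`web`, `db`), the variable names, and the helper `group_vars_for` are hypothetical and only serve as an illustration; they are not taken from any real playbook.

```python
from copy import deepcopy

# Hypothetical defaults shared by every instance of the application.
DEFAULT_GROUP_VARS = {
    "web": {"http_port": 80, "workers": 4},
    "db": {"max_connections": 100},
}


def group_vars_for(overrides):
    """Return a copy of the defaults with per-environment overrides applied."""
    merged = deepcopy(DEFAULT_GROUP_VARS)
    for group, values in overrides.items():
        merged.setdefault(group, {}).update(values)
    return merged


# Every new instance needs its own set of adjusted variables.
production = group_vars_for({"web": {"workers": 16}})
development = group_vars_for(
    {"web": {"http_port": 8080}, "db": {"max_connections": 10}}
)
```

In a manual workflow, a mapping like this has to be maintained and written out for each environment, in addition to creating the hosts and the inventory.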

You can use orcharhino with ACD as a much more efficient solution for this workflow.

With an existing Ansible Playbook and host groups, application deployments become as easy as adjusting variables and clicking Deploy.

Perform the following steps to deploy your application using ACD:

- One time only: import the Ansible Playbook used to deploy your application onto preprovisioned hosts.

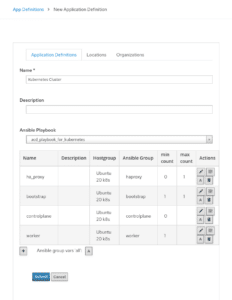

- One time only: create an Application Definition specifying the host groups and valid number of instances per service.

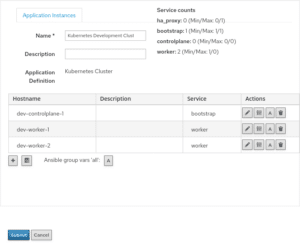

- Define an Application Instance.

- Optional: adjust group and host variables for that instance.

- Click Deploy.

orcharhino creates all required hosts and provisions them according to their host group.

When all hosts have been set up, an inventory is generated and the Ansible Playbook is executed.
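To give a rough idea of what a generated inventory could look like, the following Python sketch groups hosts by service and emits a dictionary in the JSON layout that Ansible accepts from dynamic inventory scripts (groups with `hosts` and `vars`, plus a `_meta.hostvars` section). This is not how ACD builds its inventory internally; the function `build_inventory`, the service names, and the host names are assumptions made for illustration.

```python
import json


def build_inventory(hosts_by_service, group_vars=None):
    """Build an inventory dict in Ansible's JSON dynamic-inventory layout."""
    group_vars = group_vars or {}
    inventory = {"_meta": {"hostvars": {}}}
    for service, hosts in hosts_by_service.items():
        # One inventory group per service, with its group variables.
        inventory[service] = {
            "hosts": sorted(hosts),
            "vars": dict(group_vars.get(service, {})),
        }
        for host in hosts:
            # No host-specific variables in this sketch.
            inventory["_meta"]["hostvars"].setdefault(host, {})
    return inventory


# Hypothetical hosts, as they might exist after provisioning.
hosts = {
    "web": ["web-2.example.com", "web-1.example.com"],
    "db": ["db-1.example.com"],
}
inventory = build_inventory(hosts, {"web": {"http_port": 8080}})
print(json.dumps(inventory, indent=2))
```

The point of the sketch is that the inventory follows directly from the host groups and variables of the instance, so it does not have to be written by hand for each deployment.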

Deployment of a Kubernetes Cluster

Deploying a Kubernetes cluster, even with applications on top, is a prime example of where ACD excels. ATIX provides a playbook that deploys a Kubernetes cluster based on containerd with either Calico or Cilium for container networking.

Optionally, it configures an HAProxy node to provide a high-availability API server.

It provisions four categories of hosts:

- Control plane hosts

  - You can specify the quantity you need.

  - These hosts will become control plane nodes in the Kubernetes cluster.

- Bootstrap hosts

  - Exactly one host has to be a bootstrap host.

  - This host will be used to set up the cluster initially, and here you’ll also find the “kubeconfig” file.

  - In addition, this node will be provisioned like any other control plane node and be the initial master node.

- Worker hosts

  - You can specify the quantity you need.

  - These hosts will join the cluster as worker nodes.

- HAProxy hosts

  - Currently, there can be at most one HAProxy host.

  - This host will provide high availability for the Kubernetes API Server by proxying requests to the control plane nodes.
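The host-count rules for the four categories can be expressed as a small validation check. The Python sketch below is only an illustration of those rules, not the validation that ACD performs; the service keys (`control_plane`, `bootstrap`, `worker`, `haproxy`) and the function `validate_counts` are hypothetical, and the minimum of zero for control plane and worker hosts is an assumption, since the text only says the quantity is configurable.

```python
# Allowed host counts per service as (minimum, maximum).
# None means "no upper limit". The bootstrap and HAProxy limits follow the
# rules described in the text; the other minimums are assumptions.
LIMITS = {
    "control_plane": (0, None),
    "bootstrap": (1, 1),
    "worker": (0, None),
    "haproxy": (0, 1),
}


def validate_counts(counts):
    """Return a list of problems; an empty list means the counts are valid."""
    problems = []
    for service in counts:
        if service not in LIMITS:
            problems.append(f"unknown service: {service}")
    for service, (minimum, maximum) in LIMITS.items():
        count = counts.get(service, 0)
        if count < minimum:
            problems.append(f"{service}: need at least {minimum}, got {count}")
        if maximum is not None and count > maximum:
            problems.append(f"{service}: at most {maximum} allowed, got {count}")
    return problems


# Example: three control plane hosts, one bootstrap host, five workers, one proxy.
print(validate_counts(
    {"control_plane": 3, "bootstrap": 1, "worker": 5, "haproxy": 1}
))
```

A check like this corresponds to the "valid number of instances per service" that an Application Definition specifies.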

Optionally, depending on the configuration through group variables, the playbook can deploy a Kubernetes Dashboard.

The browser-based dashboard will provide you with information about your cluster.

As a first step, import the playbook that is used to deploy Kubernetes on the preprovisioned hosts.

When creating the Application Definition, reference the playbook and define the host groups to be provisioned by orcharhino.

To deploy the application, create an Application Instance, adjust settings, and click Submit to save.

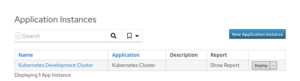

Now click Deploy and wait for your instance to be ready.

The future of deploying Kubernetes with ACD

At the time of writing, the project is still at an early stage. Therefore, a few features that should be present in most Kubernetes clusters are missing.

These include monitoring cluster health and aggregating logs.

While these tasks can currently be carried out manually, solutions could be deployed together with the cluster in the future.