Kubernetes kOps

The cloud and Kubernetes belong together, right? More or less. Although containerized applications quickly find a home with cloud providers, for some mysterious reason it’s not that easy to set up a Kubernetes environment in the cloud. Unless you use kOps, a tool written exactly for this purpose. This is how it works.

Introduction

Kubernetes is an open-source container orchestration platform that automates the deployment, scaling, and management of containerized applications. It provides a framework for building and deploying cloud-native applications, making it an ideal choice for organizations looking to modernize their application infrastructure.

Amazon Web Services (AWS) is one of the leading cloud providers and offers a number of services that can be used in combination with Kubernetes. In this article, we’ll explore how to get started with Kubernetes on AWS, including the steps for setting up a Kubernetes cluster and deploying applications.

Prerequisites

Before we dive into setting up Kubernetes on AWS, there are a few prerequisites to consider:

1. Familiarity with AWS: this guide assumes that you have a basic understanding of AWS services, such as EC2, S3, and VPCs.

2. Familiarity with Kubernetes: while this article covers the basics of setting up a Kubernetes cluster on AWS, it is recommended that you have some prior experience with Kubernetes.

3. Access to an AWS account: to follow along with this guide, you’ll need access to an AWS account.

How Does kOps Provision Infrastructure?

When deploying a Kubernetes cluster with kOps, the infrastructure that the cluster runs on needs to be provisioned first. kOps does this primarily by calling cloud provider APIs directly; alternatively, it can generate Terraform configuration that you apply yourself. How exactly does kOps provision infrastructure?

kOps uses the APIs provided by cloud providers like AWS, GCP, and Azure to create the required infrastructure components, such as VPCs, subnets, security groups, and load balancers. Instead of calling these APIs itself, kOps can also write out Terraform configuration that describes the same components.

kOps allows for further customization of the Kubernetes cluster through a set of configuration options. These options can be used to configure the Kubernetes master and worker nodes, as well as any other resources required for running the cluster.

After the infrastructure components and Kubernetes configuration options have been selected, kOps deploys the Kubernetes cluster. This involves setting up the Kubernetes master and worker nodes, installing any required software packages and tools, and configuring the Kubernetes API server, etcd, and other components.

Finally, kOps can validate the cluster by checking that the nodes have joined and that the core components are healthy.
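The phases described above map onto a small number of kOps commands. The following is a minimal sketch of that flow, not a complete setup script: the function names `provision_cluster` and `render_terraform` are hypothetical helpers introduced here for illustration, and the cluster name, state store, and zones are placeholders that you pass in.

```shell
# Sketch of the kOps lifecycle: record the spec, apply it, validate it.
# provision_cluster and render_terraform are illustrative helper names.

provision_cluster() {
  local name="$1" state="$2" zones="$3"

  # 1. Write the desired cluster spec to the state store; nothing is created in AWS yet.
  kops create cluster --name "$name" --state "$state" --zones "$zones" || return 1

  # 2. Apply the spec: kOps calls the cloud provider APIs to create the resources.
  kops update cluster --name "$name" --state "$state" --yes || return 1

  # 3. Wait (up to ten minutes) until the cluster passes validation.
  kops validate cluster --name "$name" --state "$state" --wait 10m
}

# Alternative to step 2: write Terraform configuration to a directory
# instead of letting kOps call the cloud provider APIs itself.
render_terraform() {
  local name="$1" state="$2" out_dir="$3"
  kops update cluster --name "$name" --state "$state" --target terraform --out "$out_dir"
}
```

A call such as `provision_cluster k8s-cluster.example.com s3://my-state-store eu-central-1a` would run all three phases in order and stop at the first one that fails.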

Setting up a Kubernetes cluster on AWS

Kubernetes has become a popular choice for container orchestration, providing a scalable and reliable platform for managing containerized applications. Deploying a Kubernetes cluster on AWS can be a complex task, but there are several tools to simplify the process.

One of them is eksctl, a simple CLI tool for bootstrapping and managing clusters on EKS, Amazon’s managed Kubernetes service for EC2. With EKS, Amazon takes responsibility for managing your Kubernetes master nodes (for an additional fee).

However, in this article we will be focusing on kOps, which is an open-source tool that automates the creation, management, and scaling of Kubernetes clusters on AWS. kOps will not only help you create, destroy, upgrade, and maintain production-grade, highly available Kubernetes clusters, but it will also provision the necessary cloud infrastructure.

Before we get started with kOps, there are a few prerequisites:

0 Create an AWS account and have your own domain ready (kOps uses it for the cluster’s DNS records)

1 Install kOps

curl -LO https://github.com/kubernetes/kops/releases/download/$(curl -s https://api.github.com/repos/kubernetes/kops/releases/latest | grep tag_name | cut -d '"' -f 4)/kops-linux-amd64

chmod +x kops-linux-amd64

sudo mv kops-linux-amd64 /usr/local/bin/kops
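The commands above download the Linux/amd64 binary. On other machines the release asset name differs, so here is a minimal sketch that derives it from `uname`. The function name `kops_asset_name` is a hypothetical helper, and the sketch assumes the release assets follow the `kops-<os>-<arch>` naming pattern with `amd64` and `arm64` builds; check the release page if your platform is different.

```shell
# Map `uname` output to a kOps release asset name of the form kops-<os>-<arch>.
# Arguments are optional and default to the values reported by the local machine.
kops_asset_name() {
  local os arch
  os="$(printf '%s' "${1:-$(uname -s)}" | tr '[:upper:]' '[:lower:]')"
  case "${2:-$(uname -m)}" in
    x86_64|amd64)  arch="amd64" ;;
    aarch64|arm64) arch="arm64" ;;
    *) echo "unsupported architecture: ${2:-$(uname -m)}" >&2; return 1 ;;
  esac
  printf 'kops-%s-%s\n' "$os" "$arch"
}
```

You can substitute `$(kops_asset_name)` for `kops-linux-amd64` in the download command, and run `kops version` afterwards to confirm that the binary is on your PATH.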

2 Make sure you have kubectl installed on your machine

3 Create an S3 bucket: kOps requires an S3 bucket to store cluster state information. Create an S3 bucket in your AWS account and make a note of the bucket name.
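If you prefer the AWS CLI to the web console for this step, the bucket can be created roughly as follows. This is a sketch under assumptions: `create_state_store` is a hypothetical helper name, the bucket name and region are yours to choose, and enabling versioning is a common precaution rather than something the text above requires.

```shell
# Create a versioned S3 bucket for the kOps state store and point kOps at it.
create_state_store() {
  local bucket="$1" region="$2"

  if [ "$region" = "us-east-1" ]; then
    # us-east-1 is the default region and must not be given a LocationConstraint.
    aws s3api create-bucket --bucket "$bucket" --region "$region" || return 1
  else
    aws s3api create-bucket \
      --bucket "$bucket" \
      --region "$region" \
      --create-bucket-configuration "LocationConstraint=$region" || return 1
  fi

  # Versioning makes it possible to recover an earlier cluster state.
  aws s3api put-bucket-versioning \
    --bucket "$bucket" \
    --versioning-configuration Status=Enabled || return 1

  # kOps reads the state store location from this environment variable.
  export KOPS_STATE_STORE="s3://$bucket"
}
```

For example, `create_state_store my-state-store eu-central-1` creates the bucket and exports `KOPS_STATE_STORE`, so later commands do not need an explicit `--state` flag.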

4 Create an IAM user: kOps requires an IAM user with the appropriate permissions to create and manage resources in your AWS account. Create an IAM user with the necessary permissions and make a note of the access key and secret key.

The kOps IAM user requires the following permissions:

AmazonEC2FullAccess

AmazonRoute53FullAccess

AmazonS3FullAccess

IAMFullAccess

AmazonVPCFullAccess

AmazonSQSFullAccess

AmazonEventBridgeFullAccess
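The policies listed above can be attached with the AWS CLI. The sketch below creates a group, attaches each managed policy, and adds a user to the group. The function name `create_kops_iam_user` is hypothetical, and the default name `kops` for both group and user is only a convention assumed here.

```shell
# Create an IAM group and user for kOps and attach the managed policies it needs.
create_kops_iam_user() {
  local name="${1:-kops}" policy

  aws iam create-group --group-name "$name" || return 1

  for policy in AmazonEC2FullAccess AmazonRoute53FullAccess AmazonS3FullAccess \
                IAMFullAccess AmazonVPCFullAccess AmazonSQSFullAccess \
                AmazonEventBridgeFullAccess; do
    aws iam attach-group-policy \
      --group-name "$name" \
      --policy-arn "arn:aws:iam::aws:policy/$policy" || return 1
  done

  aws iam create-user --user-name "$name" || return 1
  aws iam add-user-to-group --user-name "$name" --group-name "$name" || return 1

  # Prints the access key ID and secret access key; the secret is shown only once.
  aws iam create-access-key --user-name "$name"
}
```

After running `create_kops_iam_user`, store the printed keys securely and configure them locally, for example with `aws configure`.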

5 Proceed with creating your Kubernetes cluster

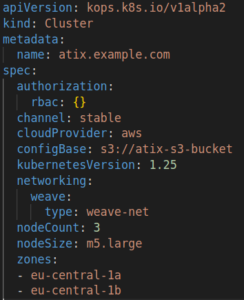

a) Declarative approach: use kOps to create a cluster configuration file that specifies the desired characteristics of your cluster, such as the number of nodes, instance type, and AWS region. Here’s an example configuration file:

Save this configuration file as example-cluster.yaml.
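One way to obtain such a file is to let kOps render it for you instead of writing the YAML by hand. The sketch below is an assumption about how you might do that, not the configuration the article refers to: `generate_cluster_spec` is a hypothetical helper, the node count of 2 is an arbitrary choice, and the state store is expected to be set through `KOPS_STATE_STORE`.

```shell
# Render a cluster spec to a YAML file without creating anything.
# Expects KOPS_STATE_STORE to be exported beforehand.
generate_cluster_spec() {
  local name="$1" zones="$2" out_file="$3"

  # --dry-run stops kOps from writing to the state store;
  # --output yaml prints the generated spec, which is redirected to the file.
  kops create cluster \
    --name "$name" \
    --zones "$zones" \
    --node-count 2 \
    --dry-run \
    --output yaml > "$out_file"
}
```

For example, `generate_cluster_spec atix.example.com eu-central-1a example-cluster.yaml` produces a file that you can review and edit before registering it.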

Create the cluster: use kOps to create the cluster by running the following command:

kops create -f example-cluster.yaml

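This registers the cluster specification in the state store. The actual resources in your AWS account, such as EC2 instances and an Elastic Load Balancer, are created once you apply the specification with kops update cluster and the --yes option.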

Validate the cluster: use kOps to validate the cluster by running the following command:

kops validate cluster atix.example.com

… and wait up to ten minutes. Validation confirms that the cluster is running and that all of the nodes have joined it.

b) Imperative approach: pass the cluster settings directly as kOps command-line flags, without a configuration file.

- Cluster with nodes spread across two AWS availability zones:

kops create cluster --name=k8s-cluster.example.com \
  --state=s3://my-state-store \
  --zones=eu-central-1a,eu-central-1b \
  --node-count=2

- Cluster with private networking and a bastion host (list three zones in ZONES for high-availability masters):

export KOPS_STATE_STORE="s3://atix-kops-test"

export MASTER_SIZE="t3.medium"

export NODE_SIZE="t3.small"

export ZONES="eu-central-1a"

kops create cluster kops-test.atix-training.de \
  --node-count 3 \
  --zones $ZONES \
  --node-size $NODE_SIZE \
  --master-size $MASTER_SIZE \
  --master-zones $ZONES \
  --networking cilium \
  --topology private \
  --bastion="true" \
  --yes

This command creates a Kubernetes cluster with three t3.small worker nodes and a t3.medium master in the eu-central-1a availability zone, using Cilium for networking, a private topology, and a bastion host. Because of the --yes option, kOps applies the configuration immediately instead of only storing it.

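To review or adjust the stored configuration, we can run:

kops edit cluster --name=kops-test.atix-training.de --state=s3://atix-kops-test

This command opens the cluster specification from the S3 bucket in your editor and saves your changes back to the state store. It does not change any AWS resources by itself.

To apply the configuration, we can run:

kops update cluster --name kops-test.atix-training.de --yes --admin --state=s3://atix-kops-test

This command reads the configuration stored in the S3 bucket and creates or updates the cloud resources to match it. The --yes option confirms that you want to proceed with the operation; without it, kOps only shows a preview of the changes. The --admin option exports admin credentials to your kubeconfig.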

To validate the cluster, we can run:

kops validate cluster --wait 10m --state=s3://atix-kops-test

Note that it can take up to 10 minutes for kOps to provision and configure everything needed for the cluster to work properly.

First, it will configure the master node and right after that the worker nodes.
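Once validation succeeds, you may want to look at the nodes yourself and, for a test cluster, remove everything again afterwards. The following sketch assumes that kubectl already points at the new cluster (for example after `kops update cluster --admin`); `show_nodes` and `delete_cluster` are hypothetical helper names.

```shell
# List the nodes of the cluster that kubectl currently points at.
show_nodes() {
  kubectl get nodes -o wide
}

# Delete a cluster and the cloud resources kOps created for it.
# Without --yes, kOps only lists what it would delete.
delete_cluster() {
  local name="$1" state="$2"
  kops delete cluster --name "$name" --state "$state" --yes
}
```

For the test cluster from this article, cleanup would look like `delete_cluster kops-test.atix-training.de s3://atix-kops-test`. Deletion cannot be undone, so double-check the cluster name first.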

6 Enjoy your cluster!

Conclusion

Deciding between kOps and other cluster management solutions depends on requirements, preferences, and expertise. kOps is popular due to its simplicity and robustness, particularly when managing clusters on AWS. It provides fine-grained control and customization options, allowing users to configure various aspects of the cluster.

On the other hand, there are alternative cluster management solutions that offer additional features and ease of use. Tools like kOps are often more suitable for more experienced Kubernetes administrators and teams who require full control and customization.

Additionally, there are other open-source cluster management solutions, such as Rancher and kubeadm. These tools provide varying levels of automation and flexibility, catering to different use cases and requirements.

In the end, the choice between kOps and other cluster management solutions depends on the level of control desired, expertise, infrastructure preferences, and available resources. Managed Kubernetes services or other open-source tools may be more suitable for those seeking simplified deployment, automated management, or a more user-friendly experience.

References

https://github.com/kubernetes/kops

https://eksctl.io/

https://kubernetes.io/

https://docs.aws.amazon.com/

https://docs.aws.amazon.com/eks/latest/userguide/getting-started.html