The Tentacles of orcharhino – Deploying with Many Subnets

Manually and individually setting up or deploying servers is a tedious task. But wait a minute—there’s orcharhino, which automates just that! With our data center automation tool, hosts can be configured, provisioned and deployed at the touch of a button.

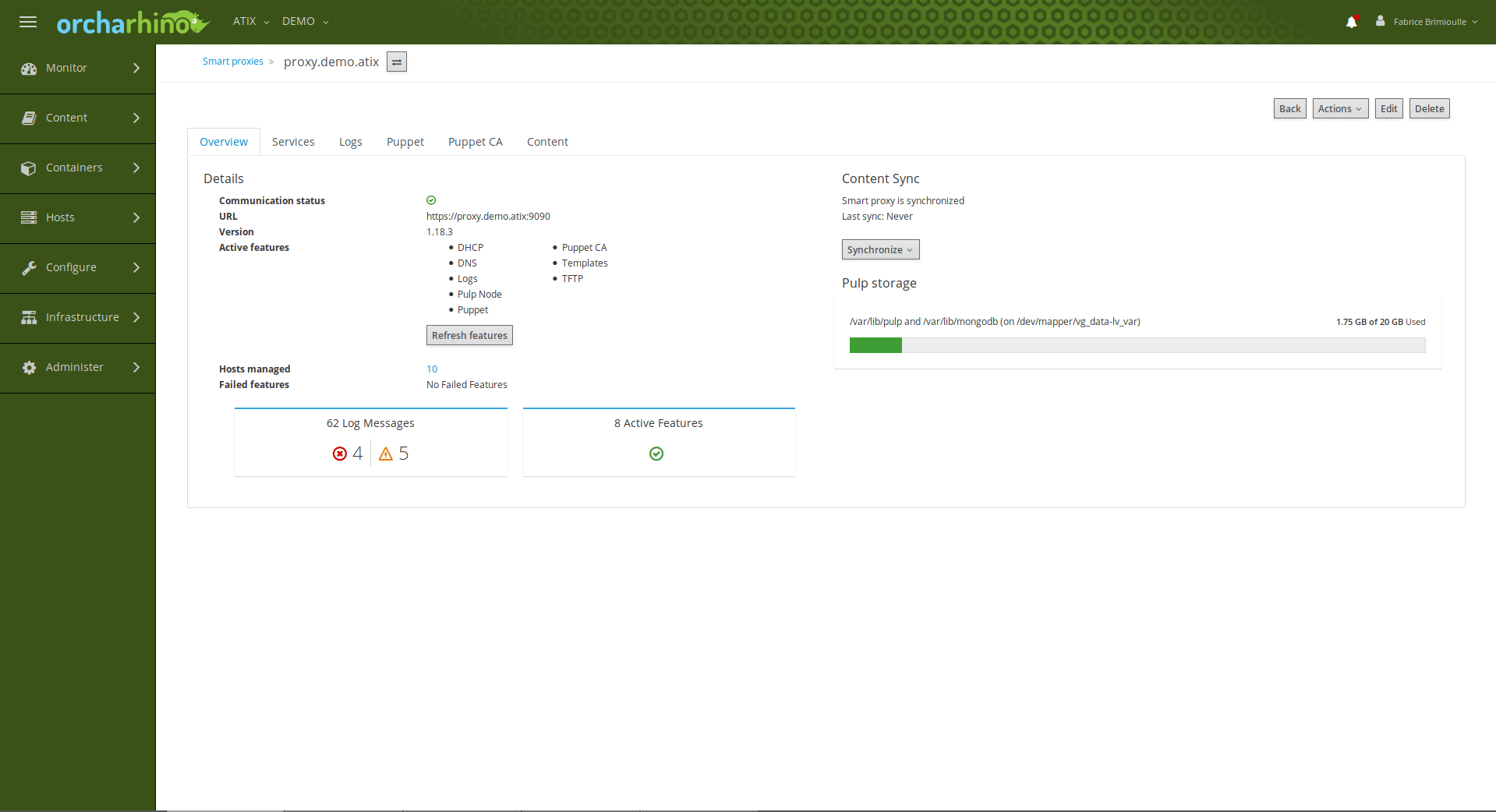

But consider this: servers in different branch offices or in isolated network segments (DMZ) need to be managed centrally while their access to the outside stays tightly restricted. This is where the orcharhino proxy comes in.

The proxy acts as a “contact partner” for orcharhino inside the remote network. From here there are two ways to proceed. By default, the host repositories are mirrored to every proxy. Because each host then talks only to the proxy in its own subnet, heavy traffic to and from orcharhino is avoided. For servers within a DMZ, a single firewall exception is enough to allow communication between the subnet and orcharhino.

However, if many subnets are in use, storage space can become scarce, because identical content has to be mirrored to many locations. This can be avoided by configuring the proxy to pass requests from the hosts directly to orcharhino. It works as follows:

1.) Installation:

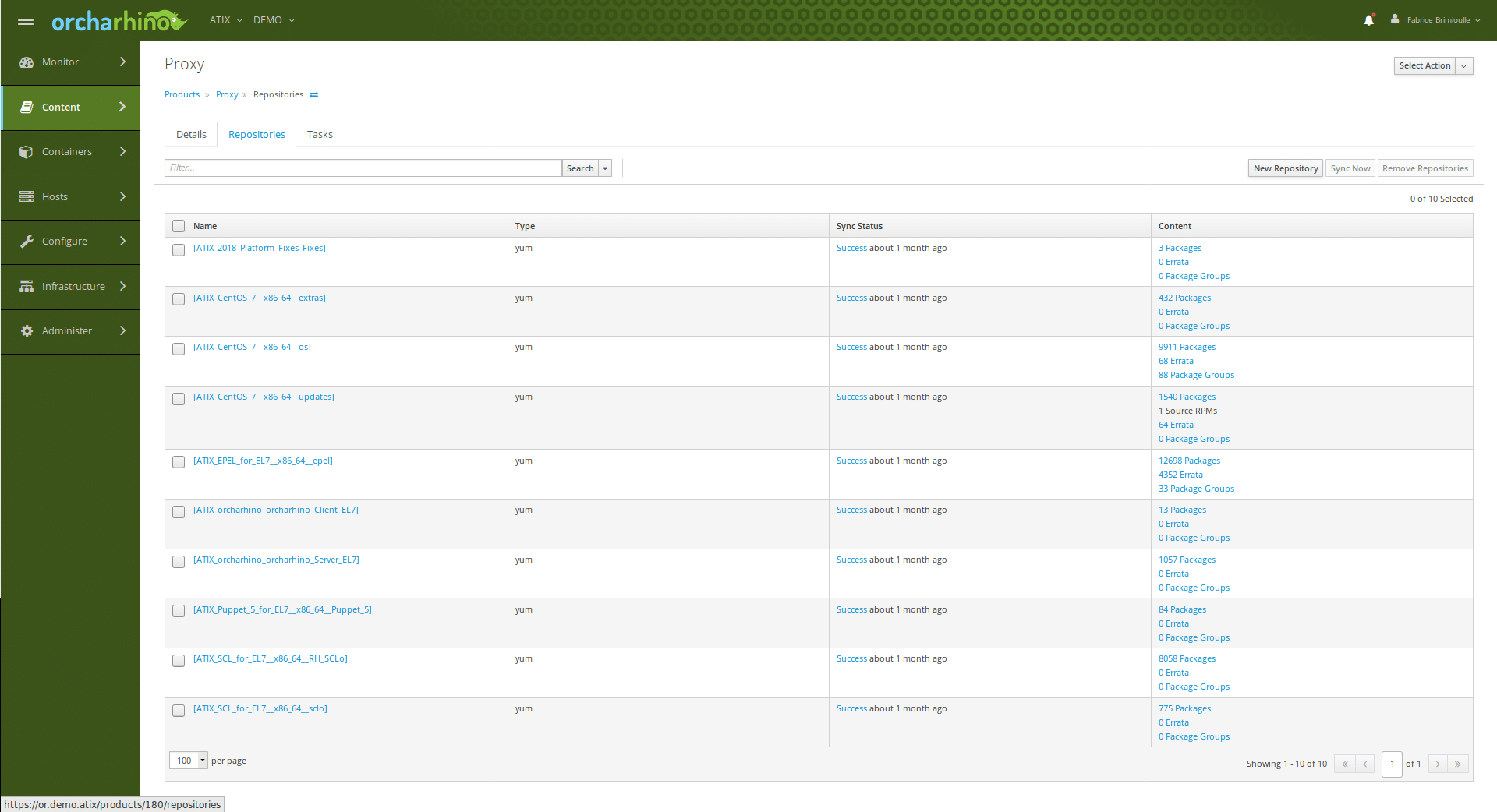

First, a virtual CentOS machine is deployed in the restricted network (e.g. via VMware or directly via orcharhino). The proxy is set up on this machine. The next step is to install the orcharhino packages. To make them available, the corresponding product must be created in orcharhino and its repositories synchronized:

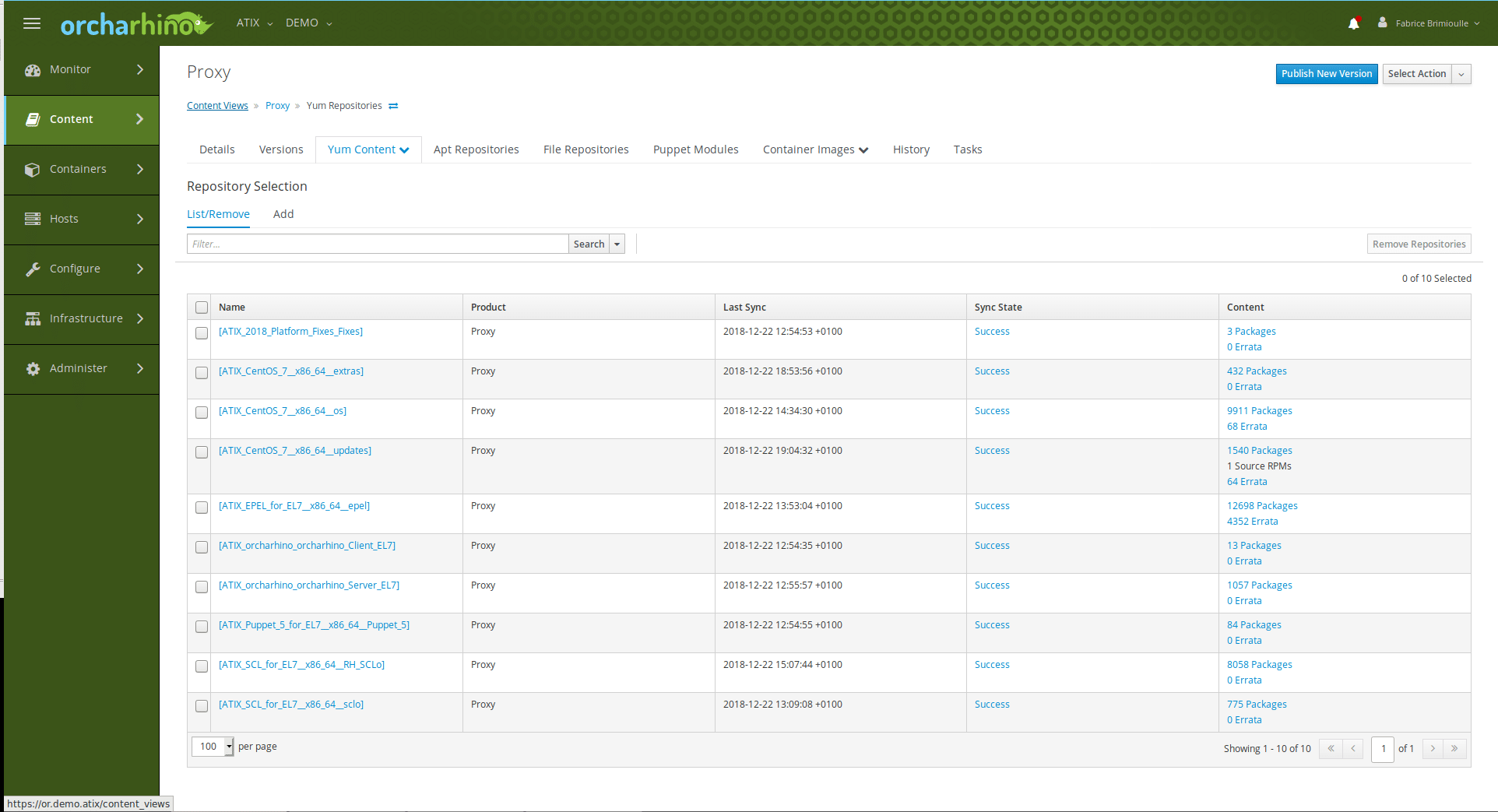

The Content View is now created from this:

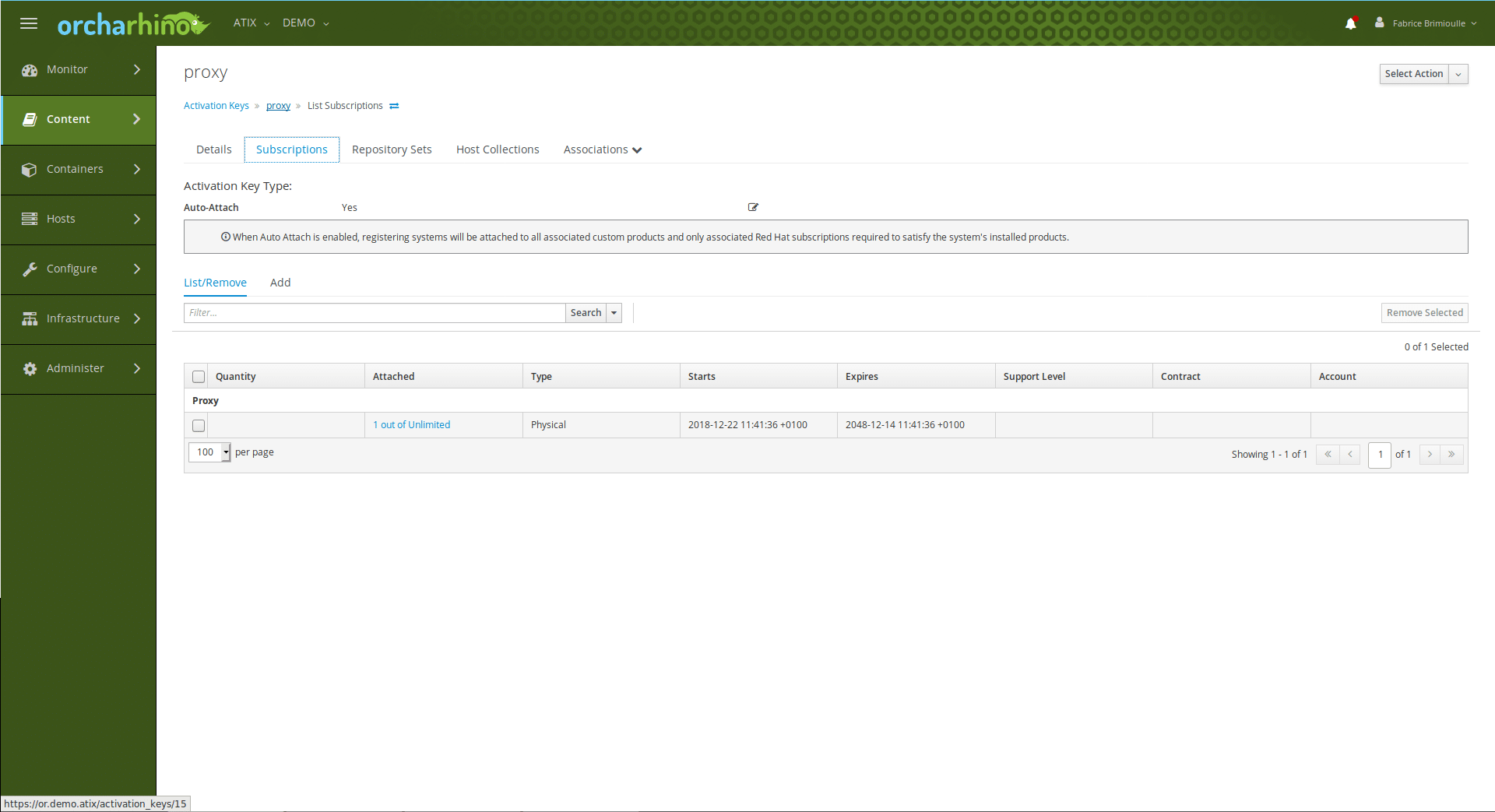

An Activation Key is created as well.

The next step is to make sure that the proxy can resolve the name of orcharhino. There are two ways to do this: either enter orcharhino with its name and IP address in /etc/hosts, or add the DNS server on which orcharhino is registered to /etc/resolv.conf. The proxy host is then registered on orcharhino with the Activation Key. With the command

yum -y -t install orcharhino

the orcharhino packages are installed.
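The /etc/hosts variant of the name-resolution step above can be sketched as a small idempotent helper. This is only an illustration: the function name `add_hosts_entry`, the address `192.0.2.10` and the name `orcharhino.example.com` are assumptions, not values from this setup.

```shell
#!/bin/sh
# Sketch only: add "IP FQDN SHORTNAME" to a hosts file unless the name is
# already listed. All names and addresses passed in are hypothetical.
add_hosts_entry() {
    hosts_file=$1   # e.g. /etc/hosts on the proxy (writing it needs root)
    ip=$2           # IP address of orcharhino
    fqdn=$3         # fully qualified name of orcharhino
    short=${fqdn%%.*}

    # Look for the exact name in the name columns of non-comment lines.
    if awk -v name="$fqdn" '
        /^[[:space:]]*#/ { next }
        { for (i = 2; i <= NF; i++) if ($i == name) found = 1 }
        END { exit (found ? 0 : 1) }
    ' "$hosts_file" 2>/dev/null; then
        return 0    # already present, nothing to do
    fi

    printf '%s %s %s\n' "$ip" "$fqdn" "$short" >> "$hosts_file"
}
```

On the proxy this could be called as `add_hosts_entry /etc/hosts 192.0.2.10 orcharhino.example.com`, and `getent hosts orcharhino.example.com` should then return the entry. Calling it twice leaves a single line in the file.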

Once the packages are installed, the setup of the proxy continues. To avoid error messages, orcharhino must in turn be able to resolve the name of the new proxy; for this, the proxy simply has to be entered into the DNS. Now the certificate for the proxy can be generated on the orcharhino console:

foreman-proxy-certs-generate --foreman-proxy-fqdn "" --certs-tar "~/-certs.tar"

The resulting archive is then copied to the proxy. In the next step, the installation command for the proxy content is executed on the console of the proxy using foreman-installer. Conveniently, the commands for copying and installing are printed on orcharhino immediately after the certificate is generated. For smooth communication between orcharhino, the proxy, and the hosts, the --enable-foreman-proxy-plugin-pulp parameter must be added to the installer call. The proxy is now enabled, although it is not yet fully configured.
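To make the shape of the two orcharhino-side steps concrete, here is a dry-run sketch that only prints the commands for a given proxy name. The FQDN `proxy.dmz.example.com`, the archive name pattern `<fqdn>-certs.tar`, the `root` login for `scp` and the helper name `print_cert_steps` are all assumptions for illustration. The installer call itself is left out, because orcharhino prints it after generating the certificate.

```shell
#!/bin/sh
# Sketch only: print (do not run) the certificate steps for one proxy.
# The FQDN passed in is a hypothetical example, not a value from the article.
print_cert_steps() {
    proxy_fqdn=$1
    # $HOME is used instead of "~", which the shell does not expand in quotes.
    certs_tar="$HOME/${proxy_fqdn}-certs.tar"

    # Step 1, on orcharhino: generate the certificate archive for the proxy.
    printf 'foreman-proxy-certs-generate --foreman-proxy-fqdn "%s" --certs-tar "%s"\n' \
        "$proxy_fqdn" "$certs_tar"
    # Step 2, on orcharhino: copy the archive to the proxy host.
    printf 'scp "%s" "root@%s:"\n' "$certs_tar" "$proxy_fqdn"
}
```

Running `print_cert_steps proxy.dmz.example.com` shows both commands for review before anything is executed.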

2.) Configuration:

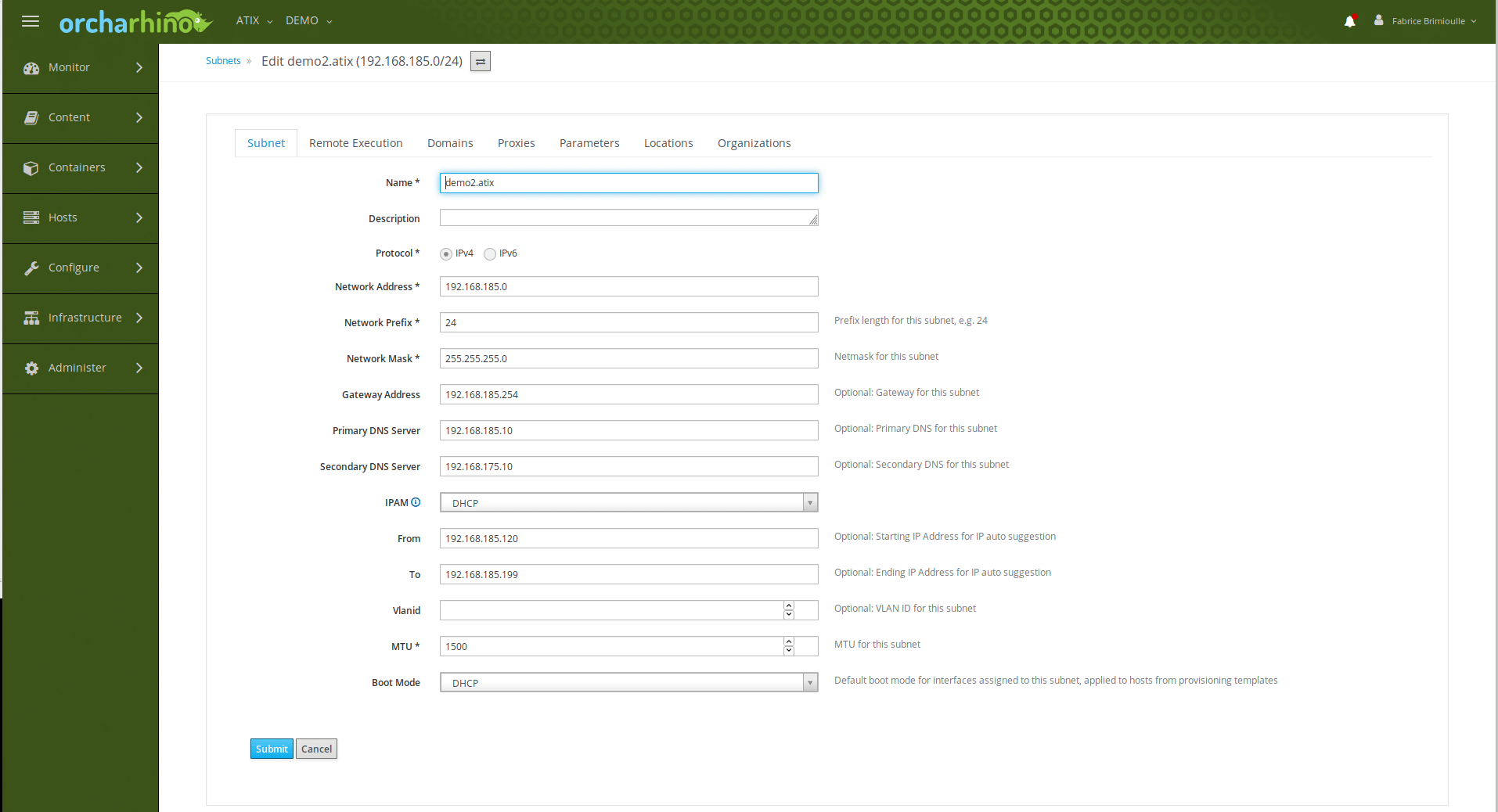

In the next step, the subnet must be created in orcharhino.

The proxy is now assigned to the organization and location, but not yet to a lifecycle environment. To mirror the content on the proxy, the proxy must first be synchronized.

If there is not enough storage space available for the synchronization, the hosts connected via the proxy can instead be set up so that they do not receive their packages from the proxy itself, but from orcharhino via the proxy. This is easily done by configuring squid, the proxy server service that is already installed and started when the proxy content is installed.

In both cases, the hosts in that subnet are registered on the proxy rather than on orcharhino. However, if the packages are obtained from orcharhino rather than from the proxy, orcharhino itself must additionally be specified as the source via the baseurl for the package supply:

subscription-manager register --baseurl=/pulp/repos --org=ATIX --activationkey=centos7-lib
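As a rough illustration of how the baseurl is put together, the following sketch prints the registration command for a given orcharhino host. The host name `orcharhino.example.com`, the `https` scheme and the helper name `print_register_cmd` are assumptions; the organization and activation key are the ones used above.

```shell
#!/bin/sh
# Sketch only: print the registration command for a host that should pull
# packages from orcharhino itself. The host name passed in is hypothetical.
print_register_cmd() {
    orcharhino_fqdn=$1
    org=$2
    activation_key=$3
    printf 'subscription-manager register --baseurl=https://%s/pulp/repos --org=%s --activationkey=%s\n' \
        "$orcharhino_fqdn" "$org" "$activation_key"
}
```

For example, `print_register_cmd orcharhino.example.com ATIX centos7-lib` prints the full command so it can be checked before it is run on a host.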

Finally, the package manager has to be informed about this.
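One plausible way to do this, assuming the hosts use yum and reach orcharhino through squid on the proxy, is the `proxy` option in the `[main]` section of /etc/yum.conf. The sketch below sets that option in a given file. The proxy URL, the port and the helper name `set_yum_proxy` are assumptions; use whatever address and port squid listens on in your setup.

```shell
#!/bin/sh
# Sketch only: set the proxy option in the [main] section of a yum
# configuration file. The URL passed in is a hypothetical example.
set_yum_proxy() {
    conf_file=$1    # e.g. /etc/yum.conf on the host (writing it needs root)
    proxy_url=$2    # e.g. http://proxy.dmz.example.com:3128
    tmp_file=$(mktemp) || return 1

    awk -v url="$proxy_url" '
        /^\[/ { in_main = ($0 == "[main]") }     # track the current section
        in_main && /^proxy[ \t]*=/ { next }      # drop an old proxy line in [main]
        { print }
        $0 == "[main]" { print "proxy=" url }    # add the new line under [main]
    ' "$conf_file" > "$tmp_file" || { rm -f "$tmp_file"; return 1; }

    # Overwrite in place so ownership and permissions of the file are kept.
    cat "$tmp_file" > "$conf_file"
    rm -f "$tmp_file"
}
```

If the file has no `[main]` section, the sketch leaves it unchanged. Other sections, such as per-repository proxy settings, are not touched.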

Different networks and zones are therefore no problem when administering with orcharhino. They can be supplied from orcharhino without having to create new firewall rules for each host. In addition, a proxy does not have to be just a proxy: it can also act as a Puppet Master, DNS or DHCP server in the appropriate zones, or perform other tasks.

Fabrice Brimioulle

6. September 2019